PostgreSQL (Postgres) is an open source object-relational database known for reliability and data integrity. It is ACID-compliant and supports foreign keys and joins.

You need an AWS account with a PostgreSQL AWS Aurora database and permissions to create and attach IAM policies. You also need a host, e.g., an EC2 instance, where you will run the ... However, you can also host PostgreSQL on an EC2 instance. For managed services such as Azure PostgreSQL, CloudSQL, and Amazon RDS, you should use `keepalives_idle` in the connection parameters. You may need to update your Postgres configuration to add the airflow user.

In the app code, `init_connection()` uses `st.experimental_singleton` so that it runs only once, and `run_query(query)` is wrapped in `st.experimental_memo`. The app then fetches its rows with `rows = run_query("SELECT * from mytable")`.

See `st.experimental_memo` above? Without it, Streamlit would run the query every time the app reruns. With `st.experimental_memo`, it only runs when the query changes or after 10 minutes (that's what `ttl` is for). Watch out: if your database updates more frequently, you should adapt `ttl` or remove caching so viewers always see the latest data.

If everything worked out (and you used the example table we created above), your app should display the rows returned by the query.
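The caching rule described above (rerun only when the query changes or once the cached result is older than `ttl`) can be illustrated with a small standard-library sketch. This is not Streamlit's implementation; `ttl_memo`, `fake_now`, and `executed` are hypothetical names introduced here, and `run_query` is a stand-in that does not touch a database.

```python
import functools
import time


def ttl_memo(ttl_seconds, clock=time.monotonic):
    """Cache results per argument tuple; recompute once an entry is ttl_seconds old."""
    def decorator(func):
        cache = {}  # maps the args tuple to (timestamp, result)

        @functools.wraps(func)
        def wrapper(*args):
            now = clock()
            entry = cache.get(args)
            # Serve the cached value only while it is younger than the TTL.
            if entry is not None and now - entry[0] < ttl_seconds:
                return entry[1]
            result = func(*args)
            cache[args] = (now, result)
            return result

        return wrapper

    return decorator


# Hypothetical test clock so the 10-minute TTL can be exercised without waiting.
fake_now = [0.0]
# Records every query that was actually executed rather than served from cache.
executed = []


@ttl_memo(ttl_seconds=600, clock=lambda: fake_now[0])
def run_query(query):
    # Stand-in for a real database call (assumption: no database is contacted).
    executed.append(query)
    return [("placeholder row for", query)]
```

Calling `run_query` twice with the same query string appends to `executed` only once; changing the query string, or advancing `fake_now[0]` to 600 or more, causes the next call to execute again. Lowering `ttl_seconds` trades fewer stale results for more executions, which is the trade-off the warning about frequently updated databases refers to.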

To get a PostgreSQL database, let us create one on AWS. We will do this using Amazon Relational Database Service (Amazon RDS), and everything done in this tutorial is Free Tier eligible. In this tutorial, you will learn how to create an environment to run your PostgreSQL database (we call this environment an instance), connect to the database, and delete the DB instance.

You can host the database on Aurora, which will enhance the benefits of having a serverless backend because AWS manages Aurora and it will scale automatically. Amazon Aurora makes it easier, faster, and more cost-effective to ... Aurora combines the performance and availability of a commercial grade database with the simplicity and cost-effectiveness of an open source database. NOTE: Aurora does not have a free quota and therefore you will have to pay for its usage.

Make sure to adapt the query to use the name of your table.

Amazon Aurora is a MySQL and PostgreSQL compatible relational database built for the cloud. DB Security Groups are only needed when the EC2-Classic Platform is supported.

Copy the code to your Streamlit app and run it.

Here we are going to read the data table from PostgreSQL and create the DataFrames. The Spark session is created with a builder chain ending in `master("local").appName("PySpark_Postgres_test").getOrCreate()`. To read the DataFrame, we use the `read` method with the JDBC URL and provide the PostgreSQL JDBC driver jar path: `df = spark.read.format("jdbc").option("url", "jdbc:postgresql://localhost:5432/dezyre_new").option("driver", "").option("dbtable", "drivers_data").option("user", "hduser").option("password", "bigdata").load()`.

Next, we read the schema of the stored table from the DataFrame, read the content of the table as a DataFrame, and print the top 5 rows. Note: if you cannot read the data from the table, check the privileges your user has on the Postgres database.

Here we learned to read data from PostgreSQL in PySpark.
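The JDBC read described above takes a handful of string options. A minimal sketch of assembling them follows, using the connection values from the text. The helper name `postgres_jdbc_options` is hypothetical, and because the source leaves the driver value empty, the sketch assumes `org.postgresql.Driver`, the class name of the standard PostgreSQL JDBC driver. The Spark calls are left as comments because they need PySpark and the driver jar to be available.

```python
from typing import Dict


def postgres_jdbc_options(host: str, port: int, database: str,
                          table: str, user: str, password: str) -> Dict[str, str]:
    """Build the option map for a Spark JDBC read from PostgreSQL."""
    return {
        "url": f"jdbc:postgresql://{host}:{port}/{database}",
        # Assumption: the standard PostgreSQL JDBC driver class
        # (the source text leaves this value empty).
        "driver": "org.postgresql.Driver",
        "dbtable": table,
        "user": user,
        "password": password,
    }


# Example values taken from the text above.
options = postgres_jdbc_options(
    "localhost", 5432, "dezyre_new", "drivers_data", "hduser", "bigdata"
)

# With PySpark installed and the PostgreSQL JDBC jar on the classpath,
# the options could be used like this:
#
#   from pyspark.sql import SparkSession
#   spark = (SparkSession.builder.master("local")
#            .appName("PySpark_Postgres_test").getOrCreate())
#   df = spark.read.format("jdbc").options(**options).load()
#   df.printSchema()   # schema of the stored table
#   df.show(5)         # top 5 rows
```

Keeping the options in one dictionary makes it easier to swap in a different host or table, or to load credentials from the environment instead of hardcoding them.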

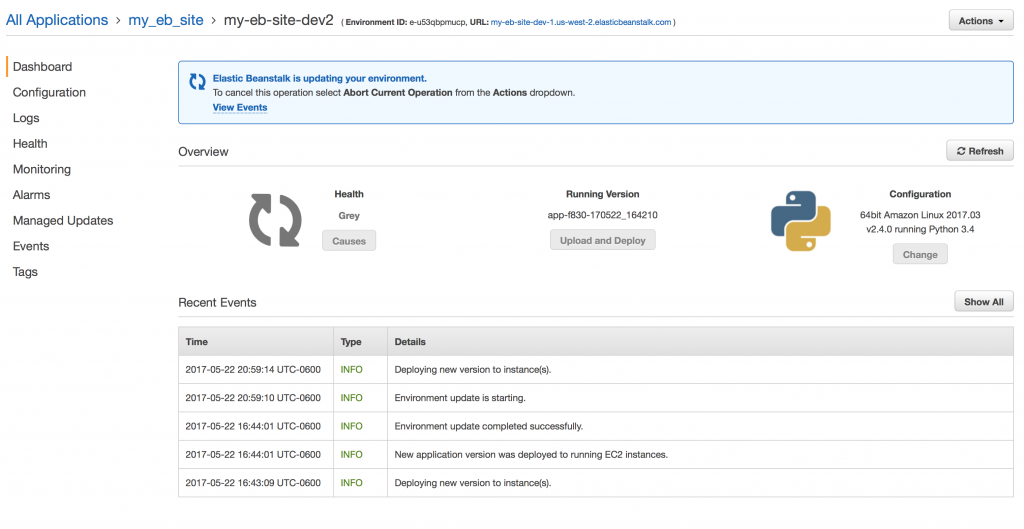

Recipe Objective: How to read data from PostgreSQL in PySpark?

In this scenario, we are going to import the pyspark and pyspark SQL modules and create a Spark session.

Step 4: To view the content of the table.

Step 1: You are getting the same dialog I was seeing above.

Step 4: If your account was like mine, you see this text: "Your account does not support the EC2-Classic Platform in this region."